Way Back Wednesday

From the Farming Smarter magazine archives - 2018

By Jennifer Blair

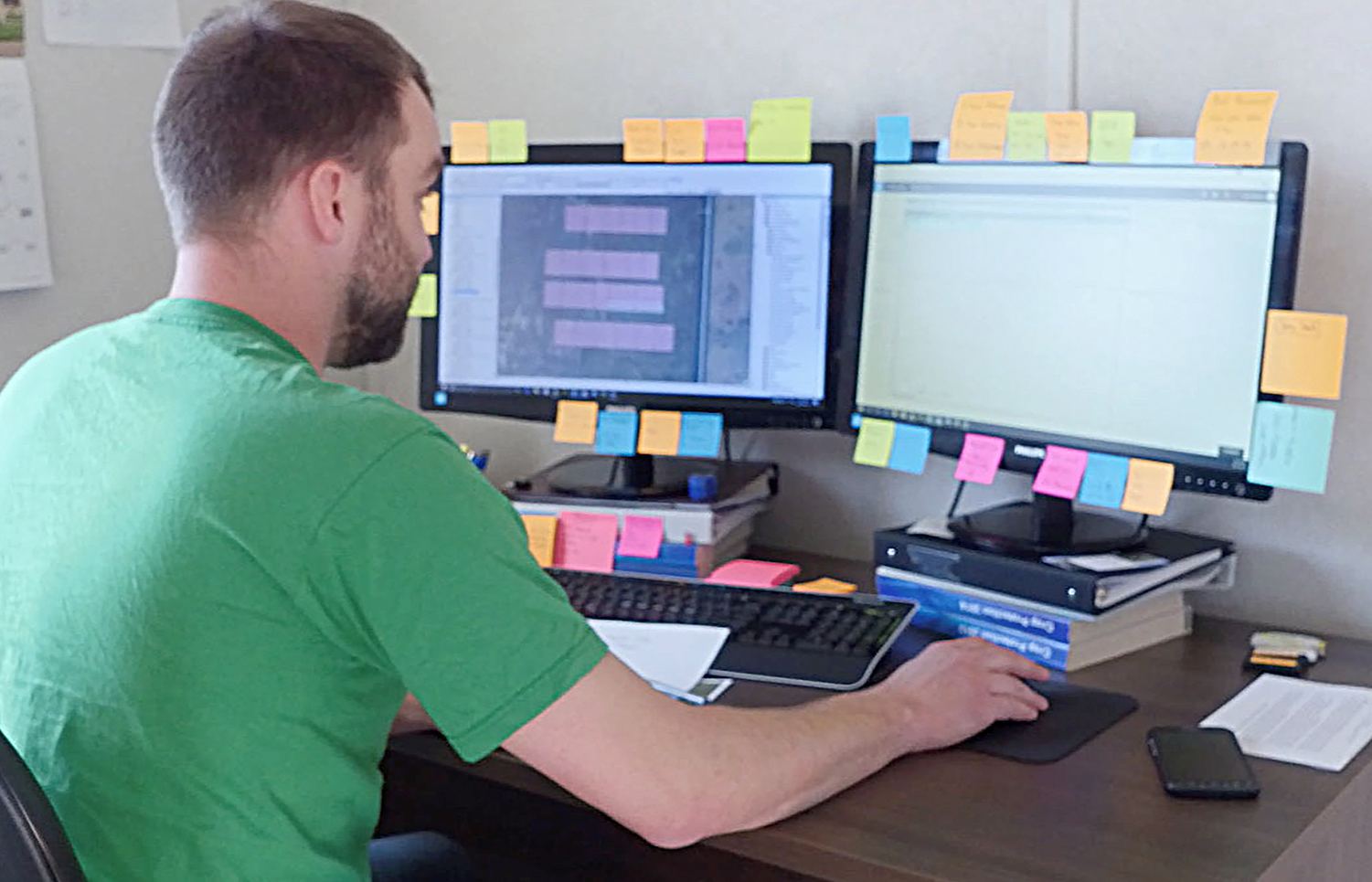

When it comes to ag, researchers do just about as much work in front of a computer as in the plots.

"There is a lot of boots-on-the-ground work in research, but setting up that trial in a way that helps us control variability and understand the results is as important as the field work," said Lewis Baarda, on-farm research manager at Farming Smarter.

"As researchers, our job is to dig through all the noise and see what's really going on. We do the best job that we can to isolate information out of the noise.

"It's essential to what we do." And much of that essential work is focussed on managing variability through statistics, he said.

Statistics - Lewis Baarda has his own system for dealing with complex sets of data.

Credit: Morton Molyneux

"Results can be confounding," said Baarda. "We see variable effects in variable environments, so we want to control that variability as much as possible. And statistics tell us how well we've done that."

Statistical Rigour

If, for instance, researchers see different results from the same treatment, statistics can help them determine whether that variability stems from a flaw in the experiment or from random factors such as subtle differences in soil, ground slope, or precipitation.

"Statistical rigour helps us ensure that the results we see are real and that they're repeatable — that we can expect to see them somewhere else," said Baarda.

"We're not just coming up with a number. It didn't just happen because it was the right year or the right location or whatever random error might have happened during the experiments.

"It gives us a certain level of confidence in our results." And that starts with proper research design.

Research Design

"Two of the big facets of research design — particularly in small-plot research — is randomization and replication," said Baarda. "By randomizing the things that we test, there's less of a chance of systematic error and replication gives us confidence that what we see isn't just a random blip one year.

"It's a built-in mechanism to verify that those results are real." For instance, Farming Smarter might test four different herbicides at five different rates over eight different site years — "either eight different locations or the same location eight different times, or some combination of both."

"Site years is just another way to have the same experiment conducted in different environments, different weather conditions, and different locations," said Baarda.

"It's just like in your own field — you might do the same thing and have the same rotation every year, but in different years get different results.

"By replicating our trials and randomizing our treatments, we mitigate the effects of any errors or deviations on the final results." And statistics can help researchers parse out whether those final results are valid or not.

"Sometimes we see results and think, 'This isn't what we expected to happen.' But when we run the statistics, they might tell us that what we're seeing is actually happening, even if we didn't expect it," he said.

"Without the statistics, it's really tough to know whether the differences we see are because of the experiment or because of something else. "It motivates us to dig a little deeper and ask what we're missing or not understanding. That's how we learn things."

On-farm Tips

Producers can use those same principles. "The basic tenets of on-farm experimentation would be replication and randomization," he said. Producers often put in a single check strip and cross their fingers that it will give them the answers they need.

"That may give you some qualitative data, but if this is something that you're going to make decisions on for your own operation in the future, you want to stick to the tenets of experimental science," said Baarda.

"You need to replicate things — have four check strips and make sure it's not just one check strip that happens to do better or worse than the rest of the field." And while statistical rigour is "just part of the process" at Farming Smarter, not all research projects follow the same standards, he added.

"As a producer, you need to be skeptical," said Baarda. "You want to ask those challenging questions. If somebody has some results, ask who did the research, what standards they adhere to, and how the trial was designed.

"There's a lot of pseudoscience out there and you want to be sure you're getting your information from a trusted source."